Ever wonder why iPhones have so many hidden features? (After all, what is the point of a feature if it is hidden, right?) Ever wonder why a four-year-old could figure out those hidden features faster than you?

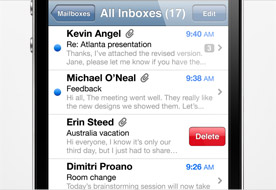

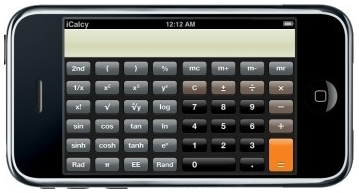

When I got my first iPhone, I didn’t know about the “swipe gesture” for deleting an email until many Google searches later. It was weeks before I found out that the basic calculator turns into a full scientific calculator when you rotate the phone to landscape. Wonderful! Actually, I didn’t figure that out by myself; I bought an iPhone tips and tricks book.

Some other features I couldn’t figure out by myself include:

- Scroll to top fast: tap on the status bar at the top of the screen.

- Type special characters: tap and hold on some keys.

- Adjust the audio and video scrub rate from high-speed to fine: slide your scrubbing finger down. I bet you didn’t know about this either.

If you are like me, make sure you check out the iPhone Tips and Tricks page on Apple.com. I’m sure it will surprise you.

In contrast to my struggles, my four-year-old son had no problem with Apple’s UI. When I got my iPad, I just downloaded some games, set up my Netflix account and handed it to him. He never came back asking for instructions, directions, tips or tricks. And it is not just my son. Check out this TechCrunch video:

Could There Be A Better Advertisement For The iPad?

Why is that? Why did Apple hide those wonderful features? Why did Apple’s UI make it hard for me but easy for my son?

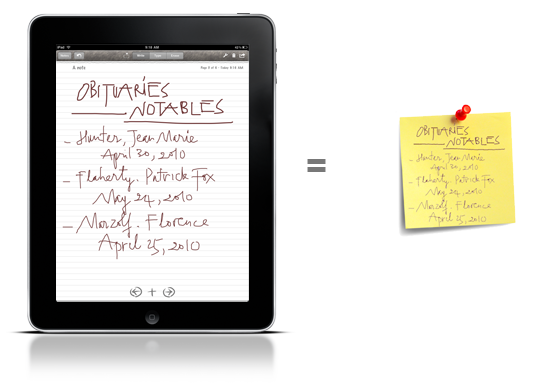

This post is my observation of a new trend in UI design that focuses on being natural, but not intuitive. It is also about how this trend affects me and my app, Notes Plus.

The Principle of Feature Exposure

Since the introduction of the touch-screen iPhone, Apple has broken a few design principles laid out in traditional UI books [MAYHEW91, COOPER95, GALITZ02]. The most noticeable of them, and the focus of this post, is the Principle of Feature Exposure [TALIN98].

The Principle of Feature Exposure (let the user see clearly what functions are available) is grounded in the Myers-Briggs personality classification of Sensibles and Intuitives [MYERS-BRIGGS]. While Intuitives are comfortable with abstract models, Sensibles prefer a UI whose features are “up front” and “in their face”. According to some psychological studies, Sensibles outnumber Intuitives in the general population by about three to one [TALIN98]. Thus, to please a general audience, software UI should expose its features clearly. In fact, this principle is one of the core UI design principles for Web pages.

While Apple excels at other UI design principles (the Principle of Metaphor, the Principle of Aesthetics, etc.), it completely ignores the Principle of Feature Exposure. Why?

Natural versus Intuitive

Let me say it up front: Apple’s UI focuses on being natural, but not intuitive. The dictionary defines “intuitive” as “obtained through intuition rather than from reasoning or observation”. Intuitiveness is good for UI; it saves users time thinking. The problem is that intuition changes from person to person. Your intuition might not be my intuition; something might be intuitive to you but not to me. A “training” factor makes intuition vary: using a computer mouse is intuitive because people get plenty of training with it, but for a person who has never used one, or for a toddler, it is not intuitive at all. A button labeled “Stop” in big text is intuitive for people who understand English. For people who don’t, it is a dead end, with no possible guess.

Being natural, on the other hand, means being universal to the human race. Touching, pushing, grabbing, and mentally associating signs (colors, drawings) with meanings are natural. A big “Don’t Walk” sign is not natural for people who don’t read English; a red, flashing sign with a picture of a walking person is. People associate red with fire, flashing with lightning (thus, danger), and the walking figure with themselves.

Still confused about the two? Give a UI to a toddler to test it. If he can figure it all out by himself, the UI is natural. Now, here is the important question: why did Apple focus on being natural rather than intuitive? Or, even more important, why did it work so well?

A new kind of user training – There’s no training, just play with it

When Apple released the first iPhone, they didn’t ship it with a user manual. In fact, only recently did they provide a digital user manual, tucked away in the Safari bookmarks. For a brand-new product, the first of its kind, you would think the iPhone needed a user manual. Why didn’t Apple provide one? I think Apple did this intentionally. They wanted to send a message to their users: “the toy is natural to use; you don’t need a manual, just play with it”.

There is a paradigm shift in user training between traditional computer software and this new Apple trend. In traditional software, users are expected to understand and master all the steps before performing a task: “Go to the File menu, under Printer Setup, choose Landscape”, “Don’t push that button, it’ll blow you up!”, etc. Imagine giving this kind of software to a toddler; he would mess up everything. With the new Apple products, users don’t need to know everything up front. The more they play with them, the more interesting things they find.

Combined with a UI that focuses on being natural, this new kind of user training makes sense. Users (or toddlers) will figure it out, even though it’s not intuitive up front. The point here is: play with it, don’t just use it.

What’s in it for me (and my users)?

Enough with all the mumbling about UI design principles; what’s in it for me and my users? Apple has the luxury of dictating and revolutionizing its users’ behavior. It has huge marketing budgets and a reputation. I don’t. I cannot just tell my users: “hey dude, just play with it; look at how your son does it”. I have to please my users and bend their behavior very gently.

The very first principle in UI design is the Principle of User Profiling: know who your users are [TALIN98]. My users (for my app Notes Plus) are tech-savvy early adopters (after all, they own iPads). My typical user is either a working professional or someone in academia (a student, a scholar, a professor, etc.).

These characteristics make it both easy and hard for me to try new UI concepts and behaviors. Being tech-savvy, my users have no problem trying new things. That makes it easy for me to introduce new UI concepts such as gestures to select, delete, scroll, etc. On the other hand, as working professionals who use computers five or more hours a day, my users are very accustomed to traditional UI components such as menus, buttons and dialog boxes. As a consequence, they expect the UI to react the way they are used to. If they don’t see a familiar reaction, they get frustrated easily. That makes it hard for me to introduce new UI behaviors.

What do I do? I introduce new UI along with a way to roll back to the traditional UI if users don’t like it. For example, my users are very accustomed to switching “editing modes” in traditional UI. To draw, tap a “Draw” button to switch to drawing mode. To select and rearrange, tap a “Select” button to switch to select mode. While this might work fine on desktop and laptop computers, it is awkward on tablets because you must move your hand away from the content area to reach the mode-switching buttons. Besides, after switching to select mode, you still have to start dragging to select. That is an extra step.

So I introduced a gesture: while in drawing mode, just circle an area to select the objects within it. Users can also undo the selection if they meant to draw a circle rather than select. While many users like this selection gesture, many others couldn’t figure out how to select and got frustrated. So I had to offer an option to turn off the selection gesture. Once it is turned off, a “switch to selection mode” button appears on the toolbar so that users can tap it to switch modes.
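To make the gesture-plus-fallback idea a bit more concrete, here is a minimal sketch, in Python for readability, of how a finished stroke might be routed to either ink or selection. It is not Notes Plus’s actual code: the `Canvas` class, `handle_stroke`, the `lasso_gesture_enabled` preference, the 20-point “closed loop” tolerance, and hit-testing each object by a single anchor point are all hypothetical choices made for illustration. A stroke that ends near where it started is treated as a lasso, and the objects inside it are found with the standard ray-casting point-in-polygon test.

```python
import math
from dataclasses import dataclass, field
from typing import Optional

Point = tuple[float, float]


def point_in_polygon(p: Point, polygon: list[Point]) -> bool:
    """Ray casting: p is inside if a rightward ray crosses the edges an odd number of times."""
    x, y = p
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]  # wrap around to close the polygon
        if (y1 > y) != (y2 > y):  # edge straddles the ray's height
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:  # crossing lies to the right of p
                inside = not inside
    return inside


def is_closed_loop(stroke: list[Point], tolerance: float = 20.0) -> bool:
    """Assumed heuristic: a stroke ending within `tolerance` of its start is a lasso."""
    if len(stroke) < 3:
        return False
    (x0, y0), (x1, y1) = stroke[0], stroke[-1]
    return math.hypot(x1 - x0, y1 - y0) <= tolerance


@dataclass
class Canvas:
    """Hypothetical note canvas; not the app's real data model."""
    objects: dict[str, Point] = field(default_factory=dict)  # object id -> anchor point
    strokes: list[list[Point]] = field(default_factory=list)  # committed ink
    selected: set[str] = field(default_factory=set)
    lasso_gesture_enabled: bool = True  # user preference; False = traditional modes only
    mode: str = "draw"  # "draw" or "select"
    last_lasso: Optional[list[Point]] = None  # gesture lasso that can still be undone

    def toggle_mode(self) -> None:
        """Toolbar fallback: an explicit mode switch, as in traditional UIs."""
        self.mode = "select" if self.mode == "draw" else "draw"

    def handle_stroke(self, stroke: list[Point]) -> None:
        """Route a finished stroke to selection or to ink."""
        use_as_lasso = self.mode == "select" or (
            self.lasso_gesture_enabled and is_closed_loop(stroke)
        )
        if use_as_lasso:
            self.selected = {
                oid for oid, anchor in self.objects.items()
                if point_in_polygon(anchor, stroke)
            }
            # Only a gesture made in drawing mode can be "undone" back into ink.
            self.last_lasso = stroke if self.mode == "draw" else None
        else:
            self.strokes.append(stroke)
            self.last_lasso = None

    def undo_selection(self) -> None:
        """The user meant to draw a circle: drop the selection, keep the stroke as ink."""
        if self.last_lasso is not None:
            self.strokes.append(self.last_lasso)
            self.selected.clear()
            self.last_lasso = None


canvas = Canvas(objects={"a": (50, 50), "b": (300, 300)})
loop = [(0, 0), (100, 0), (100, 100), (0, 100), (5, 5)]
canvas.handle_stroke(loop)
print(canvas.selected)  # {'a'}
```

With `lasso_gesture_enabled=False`, every stroke becomes ink, and the toolbar’s mode button (`toggle_mode` in this sketch) is the only way into selection, which mirrors the rollback to the traditional UI.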

Ending

It is really fun for me and my four-year-old to play with Apple products. Maybe, for the last 50 years of computing, we’ve been trained the wrong way to interact with computer user interfaces. Maybe we should be more playful, more adventurous and less serious when interacting with a computer. As for me, when I get my next Apple product, I will try to poke, shake, grab, turn, or even throw and catch it to explore its UI.

Viet Tran

October 9, 2010

References:

[MAYHEW91]: Deborah J. Mayhew – Principles and Guidelines in Software User Interface Design (pages 6-28) – Prentice-Hall, Inc. – 1991

[COOPER95]: Alan Cooper – About Face: The Essentials of User Interface Design (page 15) – John Wiley & Sons, Inc. – 1995

[GALITZ02]: Wilbert O. Galitz – The Essential Guide to User Interface Design: An Introduction to GUI Design Principles and Techniques, Second Edition (pages 41-51) – John Wiley & Sons, Inc. – 2002

[TALIN98]: Talin – A Summary of Principles for User-Interface Design (http://www.sylvantech.com/~talin/projects/ui_design.html) – Unpublished – 1998

[MYERS-BRIGGS]: Wikipedia – Myers-Briggs Type Indicator (http://en.wikipedia.org/wiki/Myers-Briggs_Type_Indicator)